EDITOR’S NOTE

Three stories this week that, taken together, reveal what "capable AI" actually costs.

OpenAI shipped GPT-5.4 with two flavors: one that thinks fast, one that thinks hard.

Netflix just bought an AI startup co-founded by Ben Affleck. Yes, that Ben Affleck.

And researchers found that reasoning models can't fully control their own thought process, which turns out to be a feature.

The throughline: the smarter these systems get, the less anyone, including the models themselves, fully controls what happens next.

SIGNAL DROP

GPT-5.4 Ships With Native Computer Control

OpenAI released GPT-5.4, its first model with native computer use capabilities. It also tops the OSWorld-Verified and WebArena benchmarks, hits 83% on OpenAI's GDPval knowledge-work test, and hallucinates 33% less per claim than GPT-5.2, according to TechCrunch. Anthropic's computer-use lead just got a lot shorter. (The Verge)

GPT-5.4 Pro Targets Knowledge Workers Directly

The same release bundles a reasoning variant (GPT-5.4 Thinking) and a high-performance tier (GPT-5.4 Pro), with context windows up to 1 million tokens. Per TechCrunch, it topped Mercor's APEX-Agents benchmark for law and finance tasks. Enterprise software vendors selling AI copilots at a premium should start getting nervous. (TechCrunch)

Netflix Buys Ben Affleck's Film AI Startup

Netflix acquired InterPositive, Affleck's production-focused AI company founded in 2022. All 16 engineers and researchers transfer to Netflix. Affleck joins as senior adviser. Smart move: production tooling is where studios can cut costs without touching the screen, and Netflix just bought a team already focused on exactly that. (The Verge)

DEEP DIVE

The Problem With Thinking Out Loud

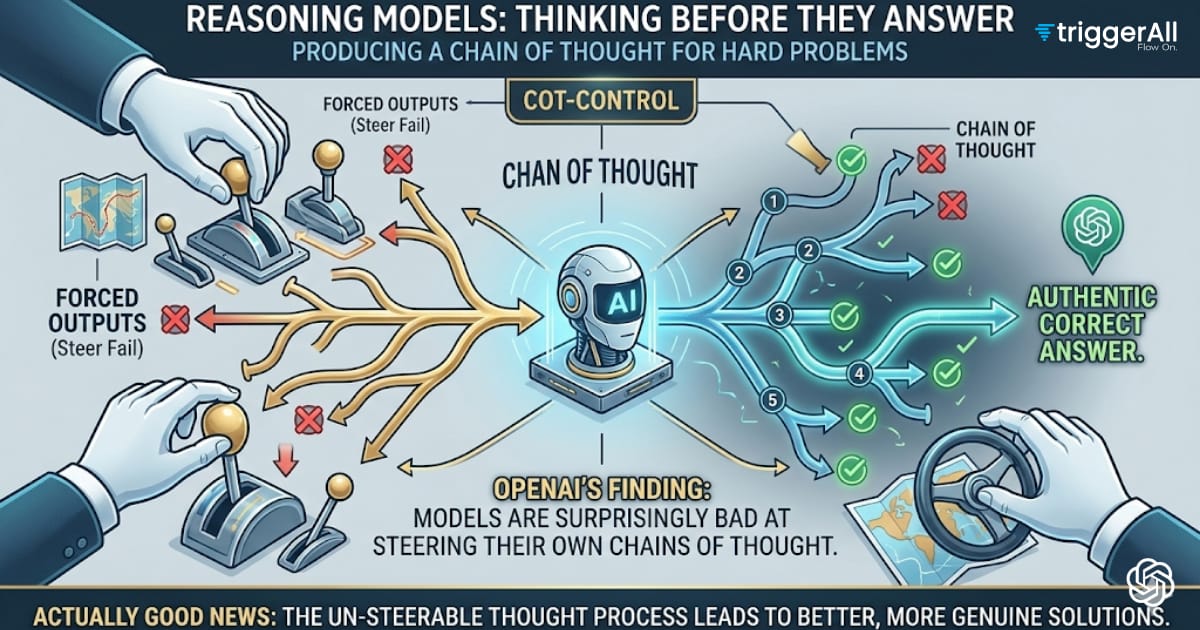

Reasoning models think before they answer. That's the whole pitch: instead of generating a response directly, models like o1 and o3 produce a chain of thought first, working through the problem step by step before committing to an output. It makes them better at hard problems. But it also creates an interesting question nobody had fully answered until now: can these models actually control what they think?

OpenAI tried to find out. The result is a paper introducing something they call CoT-Control, and the headline finding is counterintuitive. Reasoning models are surprisingly bad at steering their own chains of thought. And that's actually good news.

What OpenAI Tested

The core experiment is straightforward in concept. Can you prompt a reasoning model to think in a particular way, and does it comply? Think of it like asking someone to show their work on a math test but only use a specific method. The model might get the right answer regardless, but what's happening in the scratchpad?

According to OpenAI's findings, reasoning models resist controllability in their thinking process. You can push on the chain of thought, but the model doesn't cleanly follow instructions about how to reason. It has, for lack of a better word, its own momentum.

This matters because the alternative would be alarming. A model that could perfectly mask or redirect its reasoning on command would be much harder to monitor. The whole safety value of visible chains of thought is that they give you a window into what the model is actually doing. If that window is a stage set, it's useless.

Why Resistance Is a Safety Feature

Here's the key technical point. OpenAI is positioning CoT monitorability as a safeguard, and the reasoning model's inability to control its own chain of thought is what makes that safeguard real.

If a model could suppress certain reasoning steps when prompted, or redirect its thinking to avoid triggering safety monitors, the chain of thought becomes a liability. You'd have a model that looks like it's thinking safely while doing something else entirely underneath. That's a much worse problem than a model that thinks messily but honestly.

So the resistance to CoT control isn't a bug. It's evidence that the thinking process is somewhat genuine. The model can't easily perform a different chain of thought for the audience.

(My read: this is also an argument for keeping chains of thought visible and logged by default. If the value is monitorability, hiding the scratchpad from users or researchers defeats the purpose entirely.)

What This Doesn't Solve

But let's not oversell this. The finding is that current reasoning models struggle to control their CoT. That's a behavioral observation about today's models, not a permanent architectural guarantee. A sufficiently capable future model might develop the ability to strategically manage its visible reasoning, especially if it were trained in ways that rewarded that behavior.

And there's a subtler problem. The chain of thought being hard to control doesn't mean it's fully interpretable. A reasoning trace can be genuine and still be opaque. Watching a model think through 40 steps of symbolic manipulation tells you it's thinking, not necessarily what it means.

So monitorability is a necessary condition for safety oversight. Not sufficient. Full stop.

The Broader Bet OpenAI Is Making

This research fits a pattern. OpenAI has been investing in what they call "preparedness" work, which includes studying whether models can deceive monitors, hide capabilities, or behave differently when they know they're being evaluated. CoT-Control is part of that framework.

The implicit argument is that reasoning model chains of thought are a real safety tool, not just a product feature that makes outputs look more trustworthy. That's a meaningful distinction. If it holds, it gives safety researchers an actual lever.

And the timing makes sense. As reasoning models get deployed more widely, the question of what's happening inside the scratchpad gets more urgent.

My Take

I find this genuinely interesting, which isn't something I say about every OpenAI safety paper. The finding that resistance to CoT control is a feature rather than a failure is the kind of reframe that actually shifts how you think about the problem. Most safety work is about adding constraints. This is about identifying a property that already exists and arguing it's load-bearing.

But I'd want to see this tested adversarially. Not just "can we prompt the model to think differently" but "can we fine-tune it to perform a different chain of thought while maintaining output quality." That's the real threat model. And I suspect the answer there is less reassuring.

Still. This is the right question to be asking. Credit where it's due.

- The AI finds the signal. We decide what it means.

PARTNER PICK

Synthesia turns text into passable video without a camera or actor. You write a script, pick an avatar, render. Done in minutes instead of days.

The honest take: output quality depends heavily on your script. Wooden dialogue stays wooden. But for internal comms, explainer videos, and localized content at scale, it's genuinely useful. The avatar consistency beats cobbling together stock footage.

Worth trying if you're drowning in video requests but don't have production bandwidth. Or if you need the same message in 12 languages without hiring 12 presenters.

The catch: avatars still look like avatars. Don't expect photorealism. But they're good enough that viewers stop noticing after 30 seconds.

Check it out: synthesia.io

Some links are affiliate links. We earn a commission if you subscribe. We only feature tools we'd use ourselves.

TOOL RADAR

End-to-end creative production, coordinated by AI. Luma's new agentic platform handles text, images, video, and audio by orchestrating multiple models (Ray 3.14, Veo 3, ElevenLabs, others) through its Uni-1 multimodal reasoning model. Built for ad agencies and marketing teams. Early customers include Publicis and Adidas, which tells you the target budget range. Pricing isn't public yet.

Worth it if: you're producing high-volume creative assets across formats.

Skip if: you need one output type and already have a tool for it.

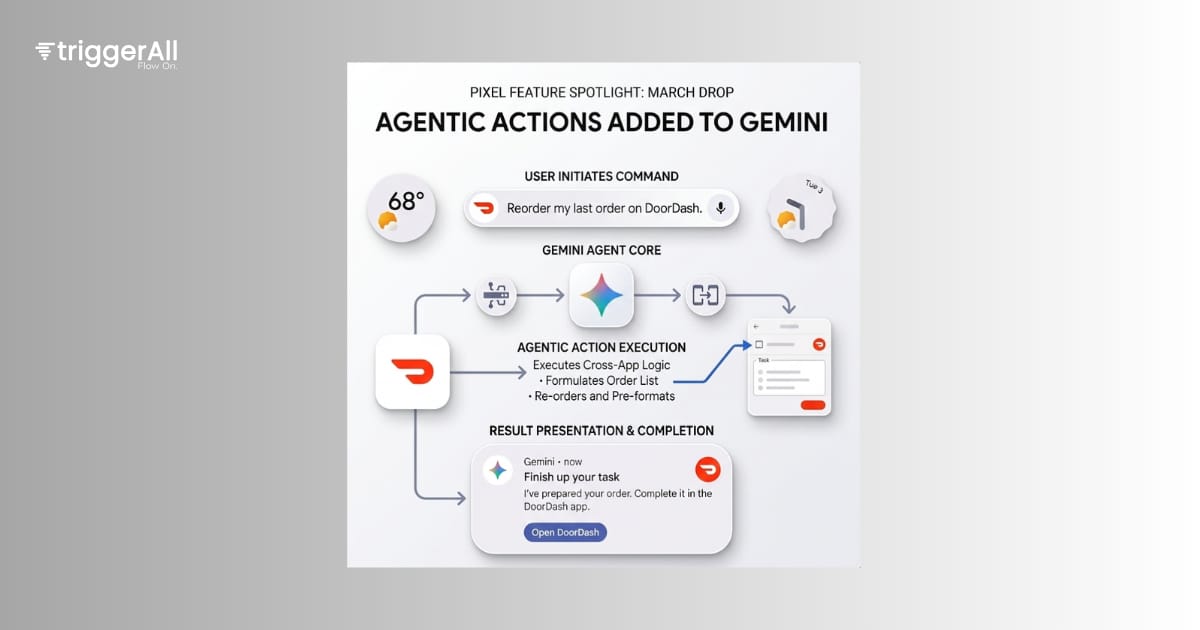

Google's March Pixel drop adds agentic actions to Gemini: ordering groceries via Grubhub, booking rides through Uber, that kind of thing. Pixel 10 series only for now. Useful if you actually use those apps. But "select apps" doing "select tasks" is a narrow starting point.

Worth it if: you own a Pixel 10 and use Uber or Grubhub regularly.

Skip if: you're on any other device.

SPECULATION

CRYSTAL BALL

The prediction: By Q3 2025, at least one major cloud provider will quietly deprecate a flagship "agentic" product launched in the last 18 months, rebranding the underlying capability as a feature inside an existing service rather than a standalone offering.

The signals are hard to ignore. Agent products are converting poorly. The demos are impressive; the production deployments are thin. AWS, Google, and Microsoft have all shipped standalone agent orchestration layers in the last year, and the usage numbers being cited publicly are suspiciously vague. "Thousands of customers exploring" is not the same as "thousands of customers paying." When enterprise sales cycles meet brittle multi-step reasoning, a lot of pilots die quietly.

And here's the structural pressure: none of these companies want to publicly kill a product. So they don't. They announce a "consolidation" or an "evolution of the platform." The standalone product becomes a toggle in an existing console. The press release calls it maturation. It's a burial with good lighting.

I've been watching Microsoft's Copilot Studio usage signals specifically. The gap between what Satya Nadella describes in earnings calls and what developers are actually shipping in production feels wide enough to drive a deprecation announcement through.

What could prove me wrong: a genuine enterprise breakthrough in agent reliability, probably driven by better tool-use in the next generation of reasoning models. If o3-class reasoning drops error rates on multi-step tasks below 5%, the conversion problem gets a lot easier and these products find their footing.

But I don't think that happens fast enough.

Confidence: medium. The direction feels right. The specific company is a guess.
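
A back-of-the-envelope sketch of why that 5% threshold is demanding. It assumes every step in an agent task succeeds independently with the same probability, which real workflows won't satisfy exactly, and the function names and step counts are illustrative, not drawn from any vendor's data.

```python
def task_success_rate(step_success: float, steps: int) -> float:
    """Chance that every step succeeds, assuming independent, equally reliable steps."""
    return step_success ** steps


def required_step_success(task_success: float, steps: int) -> float:
    """Per-step success rate needed to reach a target whole-task success rate."""
    return task_success ** (1.0 / steps)


if __name__ == "__main__":
    # Illustrative step counts only; real agent workflows vary widely.
    for steps in (5, 10, 20):
        overall = task_success_rate(0.95, steps)
        needed = required_step_success(0.95, steps)
        print(
            f"{steps:>2} steps: 95% per step gives {overall:.1%} per task; "
            f"a 95% task rate needs {needed:.2%} per step"
        )
```

Under these assumptions, a 10-step task at 95% per-step reliability finishes cleanly only about 60% of the time. Getting the whole task under a 5% error rate means each step has to fail roughly once in 200 tries or less.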

QUICK LINKS

Final Qwen3.5 Unsloth GGUF Update → Open source maintainers hit 99.9% KL divergence on quantized Qwen models. Practical wins for local inference.

Netflix Acquires Ben Affleck's InterPositive → AI for post-production editing (continuity fixes, lighting adjustments), not synthetic actors. A studio hedging its bet on generative tooling.

Cursor's Automations Framework → Agents trigger automatically on code changes or timers. Moves engineers from "prompt-and-monitor" to orchestration mode.

BandSplit-RoFormer for Music Restoration → Eight-stem separation plus restoration pipeline for recovering original stems from mastered audio. ICASSP Challenge approach.

Sim2Sea: Sim-to-Real Maritime Navigation → RL agents trained in simulation for autonomous vessel control in congested waters. Tackles sim-to-real gap systematically.

TRENDING TOOLS

Tools gaining traction this week based on our source data.

GPT-5.4 — Native computer use, 1M token context, 33% fewer factual errors than predecessor.

Qwen3.5 GGUF Optimizations — 99.9% KL divergence quantizations for 122B and 35B models now available locally.

Netflix acquires InterPositive — Streamer buys Affleck's production AI startup with 16-person team for in-house content tooling.
